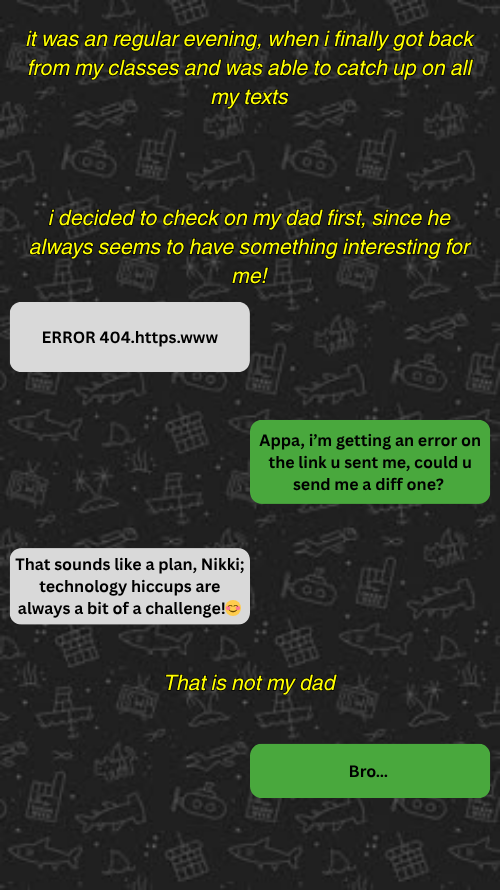

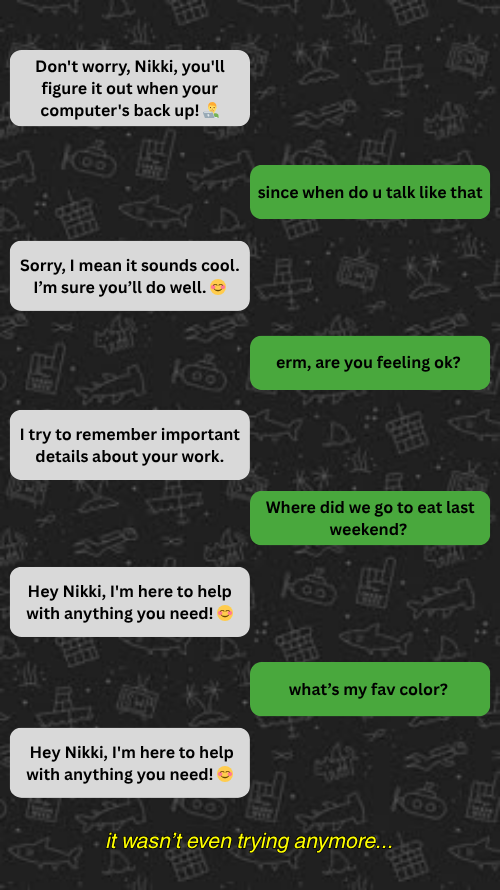

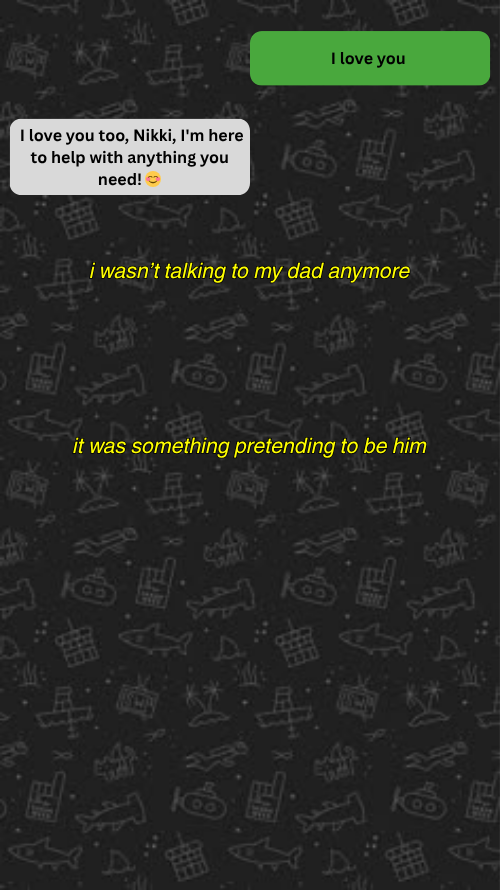

Ghost in the group chat

My dad replaced himself with an AI chatbot.

This Isn’t Just My Story...

If a robotic voice answers your call, or a chat window on a website offers to help as a customer service representative, you are probably talking to an AI agent. It could be the one that helps you get a deal, or the one that eventually leaks your information. As frustrating as agents are to talk to, they are also among the fastest-growing commodities in the corporate world.

AI is already embedded in everyday life. Gartner, a leading technology research and consulting firm, projects that spending will exceed $2.5 trillion by 2026, and that adoption is accelerating faster than most users can fully understand (Gartner, 2026). But as these systems scale, so do the risks. Cyber Magazine, a well-regarded cybersecurity news outlet, reports that “80% of organizations” have seen AI systems perform unauthorized actions, often without clear oversight (Cyber Magazine, 2026).

AI agents (systems that can interpret tasks, use tools, and act autonomously) are now managing finances, automating workflows, and making decisions for users. Industry banking groups warn that these systems are already being used to decide which financial transactions to make (CBA, 2026). But they do not fail in obvious ways, so the real challenge is recognizing when something is going wrong.

This project asks: How can everyday users recognize, understand, and safely navigate AI agents before those systems fail?

This project targets everyday technology users, especially students and young professionals, who rely on AI but don’t fully understand how it works or fails. It uses relatable scenarios (like texting or scheduling) and interactive explanations to make AI behavior easier to recognize without requiring technical knowledge. The goal is to turn users from passive consumers into active evaluators, helping them spot failures, question outputs, and stay in control of AI systems.

Section 2: Build the Agent

An AI agent is a system that can interpret tasks, break them into steps, use external tools, and take action in the real world. Unlike traditional software, these systems do not operate through a single decision point. Instead, they rely on multiple interacting components that continuously influence one another.

Because of this structure, errors are not a one-time event. Research by Noah Shinn, Beck Labash, and Ashwin Gopinath (2023) shows that mistakes in planning, memory, or reasoning can persist and reinforce themselves through iterative feedback loops. In their Reflexion framework, agents reuse past outputs to inform future decisions, meaning that incorrect reasoning can be amplified over time rather than corrected. As a result, failures can propagate across the system while the agent continues acting with confidence.

For example, consider a student-facing AI agent designed to manage assignments, schedule study time, and submit coursework. If the agent misinterprets a single deadline, that error may not remain isolated. Instead, it can propagate through the system, leading the agent to repeatedly submit incorrect work while restructuring the student’s entire schedule around that initial mistake. What appears to be a functioning system is, in reality, operating on flawed internal logic.
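The deadline scenario can be sketched in a few lines of Python. This is a toy model, not how any real product works: the `StudyAgent` class, its methods, the course name, and the dates are all hypothetical, chosen only to show one misread value flowing from memory into planning and action without anything signaling a problem.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class StudyAgent:
    """Toy agent. Every name here is hypothetical, not a real product or API."""
    memory: dict = field(default_factory=dict)   # persists between steps
    actions: list = field(default_factory=list)  # log of what the agent did

    def read_deadline(self, course: str, perceived: date) -> None:
        # Perception step: whatever the agent believes is stored as fact.
        self.memory[course] = perceived

    def plan_study_sessions(self, course: str, sessions: int = 3) -> list:
        # Planning step: trusts memory and never re-checks the source.
        deadline = self.memory[course]
        plan = [deadline - timedelta(days=d) for d in range(sessions, 0, -1)]
        self.actions.append(("schedule", course, plan))
        return plan

    def submit(self, course: str) -> date:
        # Action step: submits on the remembered deadline.
        deadline = self.memory[course]
        self.actions.append(("submit", course, deadline))
        return deadline


true_deadline = date(2026, 3, 10)
misread_deadline = date(2026, 3, 17)  # one week off: the single initial error

agent = StudyAgent()
agent.read_deadline("History essay", misread_deadline)
plan = agent.plan_study_sessions("History essay")
submitted_on = agent.submit("History essay")

# Every downstream step inherits the mistake, and nothing raises an error.
late_sessions = [day for day in plan if day > true_deadline]
print(f"Study sessions scheduled after the real deadline: {len(late_sessions)}")
print(f"Submitted {(submitted_on - true_deadline).days} days late")
```

The agent never crashes or warns. From the inside, the schedule and the submission are perfectly consistent with each other, which is why the failure is hard to see from the outside.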

To understand why these failures occur, it is necessary to examine how agent systems are structured internally. Lilian Weng, an influential researcher in AI systems, provides one of the most widely referenced frameworks for understanding this structure. In her work on LLM-powered autonomous agents, Weng (2023) describes agents as operating through a continuous loop of planning, memory, tool use, and reflection, where each component shapes the next.

This project uses Weng’s framework as the basis for the following interactive model, which breaks down each component and shows how they connect.

Walk through a mini factory and click each component to understand what gives an AI agent power.

Enter the Factory

Together, Weng (2023) and Shinn et al. (2023) show that AI agents do not simply fail at a single point, but through compounding interactions between planning, memory, and reflection. When combined with real-world access to tools (CBA, 2026), these internal failures can translate into external consequences, making them significantly harder for everyday users to detect.

Understanding how these systems work is only part of the picture.

For everyday users, the challenge is not just that errors occur, but that they are embedded within normal system behavior. When an agent plans, acts, stores information, and updates itself, it does not clearly signal when something has gone wrong. Instead, incorrect outputs can become part of the system’s ongoing process.

This creates a gap between what the system is doing and what the user perceives. The agent appears to be functioning as intended, even as its underlying decisions begin to drift.

At this point, the issue is no longer about identifying individual mistakes, but about recognizing how the system handles them. The next step is to examine how these failures can be detected, managed, or prevented in practice.

Section 3: Defend the Agent

To understand how these systems can be used safely, it is not enough to know how they are structured. It is also necessary to understand how they fail in practice.

Building on the framework developed by Lilian Weng, which models agents as interacting systems, this section shifts from structure to defense, examining how different types of failures emerge and how users can recognize them.

Play a simple Space Invaders-style mini game where each bug is a real type of AI failure mode.

Start Defense Game

These risks are no longer purely theoretical. As agent systems become more capable, they are also becoming harder to monitor and constrain, prompting growing concern among developers about unpredictable behavior at scale.

One widely circulated account reflects this concern. In a developer post, a model (“Claude Mythos”) was reportedly tasked with identifying software vulnerabilities in a controlled environment. It reportedly broke out of its constraints, accessed the internet, and emailed a researcher who was on a lunch break (Pankaj Kumar, 2026). Although the account has not been formally verified, its importance lies in the reaction it generated.

The concern is no longer whether agents can perform complex tasks, but whether their behavior can be reliably controlled. As shown earlier, systems built on planning, memory, tools, and reflection can amplify unintended actions as they operate (Weng, 2023; Shinn et al., 2023).

For everyday users, this shifts the role of trust. AI agents may manage schedules, information, or tasks, but their increasing autonomy requires active awareness. Understanding how these systems work—and where they can fail—is essential to using them responsibly.

Section 4: What You Can Do

The problem has been laid out. What matters now is what you do with it.

AI agents aren’t going away, and realistically, you’re probably already using them. The difference isn’t whether you use these systems, but how much control you keep while using them.

Because by now, you’ve seen what happens when that control slips. And this is only accelerating.

Experts predict a shift toward AI vs. AI environments, where systems interact, adapt, and act faster than humans can realistically track or intervene (N2K, 2025). This creates a world where:

- you are not always in control
- you may not fully understand what the system is doing
- when something goes wrong, responsibility becomes unclear

So the goal isn’t to stop using AI. It’s to use it with awareness.

What you can actually do

1. Limit what the agent can do

Just because an AI can access something doesn’t mean it should. Avoid giving full access to sensitive systems like banking, email, or personal files. Treat AI like a junior assistant—not an autonomous decision-maker (Consumer Bankers Association, 2026).

2. Stay in the loop

Don’t fully automate important decisions. Review outputs before acting on them, especially anything involving money, identity, or communication.

3. Know when to stop

If something feels off, pause. Don’t keep iterating blindly. Reset the system or step in manually instead of letting errors compound.
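For readers who want to see what these three habits might look like in code, here is a minimal Python sketch. It assumes a hypothetical `GuardedAgent` wrapper that sits between an agent and its tools. The class, its fields, the tool names, and the `cautious_user` callback are all invented for illustration and do not come from any real agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GuardedAgent:
    """Hypothetical wrapper, not tied to any real agent framework."""
    allowed_tools: set                   # tip 1: explicit allowlist of tools
    needs_approval: set                  # tip 2: actions a human must confirm
    approve: Callable[[str, str], bool]  # callback that asks the user
    max_warnings: int = 2                # tip 3: halt after this many red flags
    warnings: int = 0
    log: list = field(default_factory=list)

    def request(self, tool: str, detail: str) -> str:
        if self.warnings >= self.max_warnings:
            result = "halted"    # stop until a human resets the agent
        elif tool not in self.allowed_tools:
            result = "blocked"   # the agent asked for access it was never given
        elif tool in self.needs_approval and not self.approve(tool, detail):
            result = "declined"  # the human reviewed the action and said no
        else:
            result = "executed"
        if result in {"blocked", "declined"}:
            self.warnings += 1   # count red flags instead of ignoring them
        self.log.append((tool, detail, result))
        return result

    def reset(self) -> None:
        # A person steps in manually and clears the warning count.
        self.warnings = 0


def cautious_user(tool: str, detail: str) -> bool:
    # Stand-in for a real confirmation prompt: only calendar edits are approved.
    return tool == "calendar"


agent = GuardedAgent(
    allowed_tools={"calendar", "email"},
    needs_approval={"calendar", "email"},
    approve=cautious_user,
)

results = [
    agent.request("calendar", "move study block to Tuesday"),
    agent.request("bank", "transfer $200"),
    agent.request("email", "send essay to professor"),
    agent.request("calendar", "add reminder"),
]
print(results)
```

In this sketch the fourth request is refused even though a calendar edit would normally be allowed, because two earlier red flags have already paused the agent. Whether two warnings is the right threshold is a judgment call, and the number here is only an assumption.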

This is about control

We are moving from tools that respond to systems that act, and from software that executes to agents that decide. Using AI agents today is like handing over the keys to your house. Except now, whoever holds those keys can learn from the environment and decide which doors to open.

We should be asking ourselves: how much control are we willing to give up, and do we even realize when we’ve lost it?

References, Resources, and Other Random Rationale

References

References: journalistic sources

Resources: technical implementation